The Utility of CBM Written Language Indices: An Investigation of Production-Dependent, Production-Independent, and Accurate-Production Scores


School Psychology Review,

2005, Volume 34, No. 1, pp. 27-44


Jennifer Jewell

Woodford County Special Education Association

Christine Kerres Malecki

Northern Illinois University

Abstract. This study examined the utility of three categories of CBM written language indices including production-dependent indices (Total Words Written, Words Spelled Correctly, and Correct Writing Sequences), production-independent indices (Percentage of Words Spelled Correctly and Percentage of Correct Writing Sequences), and an accurate-production indicator (Correct Minus Incorrect Writing Sequences) and was designed to answer three research questions. First, how do these categories of CBM written language scores relate to criterion measures, thus providing evidence for their valid use in assessing written language? Second, how do the three categories of CBM written language scores compare to one another across grade levels? Finally, are there gender differences in CBM writing scores? Predictions were tested using a sample of 203 second-, fourth-, and sixth-grade students from an Illinois school district. Results indicated grade level differences in how measures of written language related to students’ scores on a published standardized achievement test, their Language Arts grade, and an analytic rating. Specifically, with older students, the production-independent and accurate-production measures were more related to standardized achievement scores, an analytic rating, and classroom grades than measures of writing fluency. Implications were made regarding the appropriateness of using each type of CBM written language index for different age levels, gender, and assessment purposes.

Curriculum-based measurement (CBM) is an established procedure used to directly measure student performance in the general education curriculum. CBM can be used to create norms, measure students’ achievement, and monitor progress in the academic areas of reading, written expression, spelling, and mathematics. An increasing number of educators and support personnel are turning toward the use of CBM within a problem-solving process (Shinn, 1995) to gather information for instructional decision making (Deno, Fuchs, Marston, & Shin, 2001; Fuchs, Fuchs, & Hamlett, 1990), eligibility decisions (Fuchs & Fuchs, 1997; Shinn, Ysseldyke, Deno, & Tindal, 1982), progress monitoring (Deno et al., 2001; Marston, Fuchs, & Deno, 1986), and other alternative uses (Deno et al., 2001; Elliott & Fuchs, 1997; Espin et al., 2000). The current study defines CBM (focusing on written language) as a standardized, short-duration fluency measure of students’ writing skills gathered in the context of Shinn’s (1995) problem-solving model.

Correspondence concerning this article should be addressed to Christine Malecki, PhD, Northern Illinois University, Department of Psychology, DeKalb, IL 60115; E-mail: cmalecki@niu.edu.

Copyright 2005 by the National Association of School Psychologists, ISSN 0279-6015

Research on the Reliability and Validity of CBM Writing Measures

The reliability and validity evidence for uses of CBM in reading and mathematics is well-documented (Fuchs & Fuchs, 1997; Marston, 1989; Tindal & Parker, 1989). However, there is a need for more research on the reliability and validity of CBM in the area of written language. Previous research has examined the reliability and validity evidence for three types of CBM writing indices; production-dependent indices, production-independent indices, and an accurate-production index. Research investigating the reliability and validity of these three types of CBM writing indices is summarized below.

Production-dependent (fluency) indices. Production-dependent indices are measures of writing fluency because they depend upon how much a student writes. Deno, Mirkin, and Marston (1980) and Deno, Marston, and Mirkin (1982) found that Total Words Written, Words Spelled Correctly, Correct Letter Sequences, and Mature Words were all significantly correlated with written language criterion measures such as the Test of Written Language (Hammill & Larsen, 1978) and the Stanford Achievement Test (Madden, Gardner, Rudman, Karlsen, & Merwin, 1978; r = .41 to .88). These studies provided evidence that these scoring measures were closely related to other commonly accepted outcome measures. Additionally, Marston and Deno (1981) found strong evidence for the reliability of Total Words Written, Words Spelled Correctly, and Correct Letter Sequences. Specifically, the test-retest reliability coefficients, parallel-test correlations, split-half reliability coefficients, and interrater correlations were all moderate to high (r = .57 to .99). Videen, Deno, and Marston (1982) were the first to examine “Correct Writing Sequences” (CWS) defined as “two adjacent, correctly spelled words that are acceptable within the context of the phrase to a native speaker of the English language” (p. 11). CWS not only addresses fluency, as in Total Words Written, or a single skill, as in Words Spelled Correctly, but many components of written expression, such as grammar, capitalization, punctuation, and spelling. Videen et al. (1982) found that a high percentage of interrater agreement was attained when scoring for CWS, and that the average number of CWS in students’ writing samples more than doubled from third to sixth grade. The strongest correlations were found between CWS and Words Spelled Correctly (r = .92), Total Words Written (r = .91), holistic ratings (r = .85), and the raw score total on the Test of Written Language (TOWL; r = .69). This study provided evidence for the reliability and validity of counting the number of Correct Writing Sequences to assess student proficiency in written expression.

Production-independent (accuracy) indices. Production-independent indices such as Percentage of Words Spelled Correctly are “percentage indices” and measures of writing accuracy because they are independent of the length of the writing sample. Tindal and Parker (1989) examined a number of writing and spelling indices; however, only three indices represent production-independent (accuracy) measures because the scores are independent of the length of the writing sample. Percentage of Legible Words, Percentage of Words Correctly Spelled, and Percentage of Words Correctly Sequenced communicate the level of accuracy of the writing rather than just the amount of, or fluency of the writing.

The results of the Tindal and Parker (1989) study indicated that the three production-dependent indices were only weakly correlated to teachers’ holistic ratings of middle school students’ writing (rs = .10, .24, and .31, respectively). The two measures of Correct Writing Sequences and the Percentage of Legible Words were moderately related to holistic ratings (rs = .45 and .42, respectively). The two remaining production-independent measures (Percentage of Words Correctly Spelled and Percentage of Words Correctly Sequenced) were very strongly correlated to the holistic

ratings (rs = .73 and .75), providing evidence for their valid use in evaluating students’ writing skills.

Accurate-production indices. The accurate-production index (Correct Minus Incorrect Writing Sequences) is a measure of both writing fluency and accuracy. A recent study by Espin and colleagues (2000) revealed a relatively new indicator for use in CBM written language assessment: Correct Minus Incorrect Writing Sequences (CMIWS). Using a sample of sixth and seventh grade students, they found internal consistency coefficients ranging from .72 to .78 for CMIWS. As evidence for validity, Espin et al. compared CMIWS scores to teachers’ ratings of student writing proficiency and found moderately strong correlations when examining 3-minute writing samples on story writing (r = .66) and descriptive writing samples (r = .67). Correlations between the CMIWS and students’ district writing test scores were identical (r = .69) when looking at descriptive and story writing CBM samples. These results suggest that the CMIWS index may be a valid score to use, particularly with middle school students.

Summary of Research Findings

Some limitations are evident in the aforementioned studies such as only one or two criterion measures being used, and those measures represented either direct or indirect writing measures (Marston, 1989; Moran, 1987; Stiggins, 1982) and not both. The research presented above also indicates that there is evidence for the reliability of all three categories of CBM written language scores. Furthermore, there is adequate validity evidence for the designed purposes of the scores. The task that remains is to determine for what specific use(s) and for whom each index is suitable. In practice, each type of scoring index may be more appropriate to use for certain assessment purposes, or with students of certain age and gender. For example, during individual student progress monitoring, production-dependent measures, or the relatively new accurate-production measure (i.e., CMIWS) may be best for communicating student progress. For communicating results and making comparisons between students on the accuracy of writing, production-independent indices (percentage scores) may be most understandable to teachers, parents, and the students themselves. However, the production-independent indices do not communicate any information about the amount of writing a student has produced. Finally, research has examined the issue of using various CBM written language scoring indices for making eligibility decisions (e.g., Parker, Tindal, & Hasbrouck, 1991; Watkinson & Lee, 1992). The results of the Watkinson and Lee (1992) and Parker et al. (1991) studies support the use of accuracy-based (production-independent) curriculum-based writing measures to distinguish between students in regular and special education, particularly for secondary level students.

A second consideration when determining what index is most valid for a particular use may be the age of the students. Deno and colleagues (1980, 1982) and Videen et al. (1982) found results indicating that production-dependent indices are reliable and valid measures in assessing elementary school children’s writing skills. Tindal and Parker (1989), Watkinson and Lee (1992), and Parker et al. (1991) found that measures of writing accuracy (production-independent) were more appropriate measures of older students’ writing skills (e.g., sixth through eighth grades) than measures of writing fluency.

Another factor that may need to be taken into consideration when deciding upon which CBM scoring index to use is the student’s gender. Within the CBM reading literature, research results have been mixed as to whether gender contributes to differences in students’ performance. A study by Kranzler, Miller, and Jordan (1999) suggested that fifth-grade students’ reading performance differed significantly between boys and girls. However, contradictory evidence was presented by Knoff and Dean (1994), in which first-grade students’ scores showed significant gender differences, but older students’ scores did not. Similar studies have not been conducted in the CBM area of written expression. Given the implications that these findings might have regarding normative data and decision making for boys and girls in the area of written language, further research in this area is needed.

Research Questions and Predictions

The present study attempts to add to the literature on CBM in the area of written language. Specifically, the present study is an investigation of the utility of three categories of CBM written language indices including production-dependent indices (Total Words Written, Words Spelled Correctly, Correct Writing Sequences), production-independent indices (Percentage of Words Spelled Correctly and Percentage of Correct Writing Sequences), and an accurate-production indicator (Correct Minus Incorrect Writing Sequences). This investigation extends the literature by comparing students’ CBM scores to both direct (analytical scoring) and indirect criterion measures of writing ability (standardized achievement test, and Language Arts grades) and by investigating students’ scores from three grade levels. The present study was designed to answer the following three research questions: (a) How do these categories of CBM written language scores relate to criterion measures, thus providing evidence for validity in assessing written language? (b) How do the three categories of CBM written language scores compare to one another across grade levels? and (c) What gender differences are there in the three categories of CBM scores?

It was predicted that adequate validity evidence would be obtained for all six CBM scoring indices in the form of moderate to strong correlations between scores on curriculum-based measures, the Tindal and Hasbrouck (1991) Analytic Scoring System (THASS), the Stanford Achievement Test (SAT; Harcourt Brace Educational Measurement, 1997), and students’ Language Arts classroom grades. It was also predicted that scores on the two production-independent measures (Percentage of Words Spelled Correctly and Percentage of Correct Writing Sequences) and the accurate-production measure (Correct Minus Incorrect Writing Sequences) would more closely relate to scores on the SAT, THASS, and the students’ Language Arts grades than the production-dependent scores (Total Words Written, Words Spelled Correctly, and Correct Writing Sequences) based on the work of Tindal and Parker (1989) and Espin et al. (2000). Finally, it was predicted that gender differences would be found on some of the CBM measures with girls outperforming boys (Knoff & Dean, 1994; Kranzler et al., 1999; Malecki & Jewell, 2003).

Method

Participants

The participants in this study were 203 second- (n = 87), fourth- (n = 59), and sixth-grade (n = 57) students from three schools in one rural northern Illinois school district. The schools were recruited by contacting the principals and giving them a brief description of the study’s procedure. Participation by the students was voluntary and required parental consent.

The three schools consisted of an early elementary, an elementary, and a middle school. The sample comprised 44% males (n = 90) and 56% females (n = 113). In addition, 6.4% of the sample was enrolled in special education (n = 13). Of the 13 students in special education, 8 were male and 5 were female, 5 were receiving services for a learning disability in reading, 3 for a learning disability in writing, 4 for a learning disability in math, and 6 were receiving speech/language services. The racial make-up of the participating school district was 94% Caucasian, 5% Hispanic, and 1% other (African American or Asian). Within the school district, 11% of the families were classified as low income and 89% as non-low income. Furthermore, 97% of the district was English speaking and 3% had limited English proficiency. Racial and SES data were not available for the participating students.

Measures

The measures included in this study were a curriculum-based measure of written expression, an analytic scoring system, the Stanford Achievement Test (SAT; Harcourt Brace Educational Measurement, 1997), and students’ classroom Language Arts grades from the fall semester.

Curriculum-based measures (CBM) in written expression. One 3-minute CBM writing probe was collected from participating students. The students were given a lined sheet of paper with a story starter typed at the top. Per typical CBM writing probe procedures, the students were given 1 minute to think about the story starter and 3 minutes to write. The writing samples were scored using the following six procedures: Total Words Written (TWW), Words Spelled Correctly (WSC), Correct Writing Sequences (CWS), Percentage of Words Spelled Correctly (%WSC), Percentage of Correct Writing Sequences (%CWS), and Correct Minus Incorrect Writing Sequences (CMIWS). Table 1 provides the definitions of each procedure.

Tindal and Hasbrouck analytic scoring system (THASS). An analytic scoring system described by Tindal and Hasbrouck (1991) was used as an external criterion measure of students’ writing abilities. Using this system, students’ writing samples are scored on three dimensions of writing: story-idea, organization-cohesion, and conventions-mechanics. On each dimension, a scoring rubric is used to rate students’ writing samples on a scale from 1 to 5, with a “5” representing the highest quality. According to Tindal and Hasbrouck (1991), story-idea represents the “extent to which the composition has a cogent plot and a theme that is unique and captures the reader’s interest” (p. 238). Organization-cohesion addresses the “degree to which the story has an overall structure and progresses systematically, with well-defined transitions” (p. 238). Finally, conventions-mechanics focuses on “syntax, sentence structure, grammar, spelling, and handwriting” (p. 238). Specific guidelines for rating a writing sample on these three dimensions using a 5-point scale can be found in Tindal and Hasbrouck (1991). This particular rating system was chosen for use in the present study because of its similarity to state-wide standardized writing assessment techniques. Interrater reliability coefficients for the three writing components within the THASS have ranged from .73 to .79 (Tindal & Parker, 1991).

Stanford Achievement Test (SAT; Harcourt Brace Educational Measurement, 1997). The SAT measures school achievement for students in Kindergarten through Grade 12. The SAT is a paper-and-pencil test in multiple-choice format. The standardized battery consists of 11 different subtests including: Total Reading, Word Study Skills, Word Reading, Reading Comprehension, Total Math, Problem Solving, Math Procedures, Language, Spelling, Environment, and Listening.

For the present study, there were two particular subtests of interest within the SAT, Language and Spelling. The Language subtest measures proficiency in mechanics and expression. This subtest also looks at content, organization, and sentence structure. The Spelling subtest requires students to identify the correct spelling of words. All analyses including these two subtests used their National Normal Curve Equivalent (NCE) scores based on grade level.

Language Arts grade. Students’ quarterly report card grades for Language Arts were recorded for the fourth- and sixth-grade students. The second-grade students did not receive letter grades and therefore were excluded from any analyses using letter grades. The letter grades from the students’ report cards were converted to a 4-point scale. Students’ grades included plusses and minuses; however, any “A” (A+, A, or A-) was converted to a 4.0, any “B” to a 3.0, and so on. In order to best reflect students’ grades during the time the CBM samples were collected, the first and second quarter grades were averaged to form a “fall semester” grade.

Procedure

Each school was sent copies of the curriculum-based writing probes to be distributed to the participants. The teachers in the students’ primary classrooms administered the curriculum-based measures. The teachers were provided with specific oral and written directions for proper administration of the writing probes. First, the teachers handed out a single sheet of lined paper to each

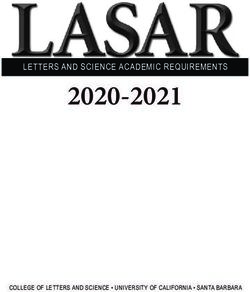

Table 1

Scoring Procedures, Definitions, and Computations

| Index | Definition | Computation |
|---|---|---|
| Production-Dependent Indices | | |
| Total Words Written (TWW) | A count of the number of words written. A word is defined as any letter or group of letters separated by a space, even if the word is misspelled or is a nonsense word. | TWW |
| Words Spelled Correctly (WSC) | A count of the number of words that are spelled correctly. A word is spelled correctly if it can stand alone as a word in the English language. | WSC |
| Correct Writing Sequences (CWS) | A count of the correct writing sequences found in the sample. A correct writing sequence is defined as two adjacent writing units (i.e., word-word or word-punctuation) that are acceptable within the context of what is written. Correct writing sequences take into account correct spelling, grammar, punctuation, capitalization, syntax, and semantics. | CWS or Possible Writing Sequences minus Incorrect Writing Sequences |
| Production-Dependent Composite (PDC) | Average of students’ raw scores on the measures of TWW, WSC, and CWS. | (TWW + WSC + CWS) / 3 |
| Production-Independent Indices | | |
| Percentage of Words Spelled Correctly (%WSC) | The percentage of words in the sample that are spelled correctly. | WSC / TWW |
| Percentage of Correct Writing Sequences (%CWS) | The percentage of correct writing sequences in the sample. | CWS / Possible Writing Sequences |
| Production-Independent Composite (PIC) | Average of students’ scores on the measures of %WSC and %CWS. | (%WSC + %CWS) / 2 |
| Accurate Production Index | | |
| Correct Minus Incorrect Writing Sequences (CMIWS) | This measure subtracts the number of incorrect writing sequences in the sample from the total number of correct writing sequences. The number of incorrect writing sequences was calculated by subtracting the number of correct writing sequences in the sample from the total number of possible writing sequences. | CWS – Incorrect Writing Sequences or CWS – (Possible Writing Sequences – CWS) |
| Criterion Measure in Current Study | | |
| THASS | Writing samples are scored on three dimensions: story-idea, organization-cohesion, and conventions-mechanics, each on a 5-point scale. A single THASS score was calculated by summing students’ scores for story-idea, organization-cohesion, and conventions-mechanics. | Story-idea + Organization-Cohesion + Conventions-Mechanics |
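The computations in Table 1 can be sketched in a few lines of Python. This is a minimal illustration and not the authors' scoring procedure: the function name `cbm_indices`, its argument names, and the example counts are hypothetical. The sketch assumes that the four counts (TWW, WSC, CWS, and possible writing sequences) have already been obtained by a human scorer, and that the percentage indices are expressed on a 0 to 100 scale.

```python
def cbm_indices(tww, wsc, cws, possible_ws):
    """Derive the Table 1 indices from four hand-scored counts.

    tww: Total Words Written
    wsc: Words Spelled Correctly
    cws: Correct Writing Sequences
    possible_ws: total possible writing sequences in the sample
    (All names are illustrative and not taken from the article.)
    """
    if tww <= 0 or possible_ws <= 0:
        # Assumption: percentage indices are undefined for an empty sample.
        raise ValueError("sample needs at least one word and one sequence")

    # Incorrect sequences are whatever is left after removing correct ones.
    incorrect_ws = possible_ws - cws
    pct_wsc = 100 * wsc / tww
    pct_cws = 100 * cws / possible_ws
    return {
        "TWW": tww,
        "WSC": wsc,
        "CWS": cws,
        "PDC": (tww + wsc + cws) / 3,      # production-dependent composite
        "%WSC": pct_wsc,
        "%CWS": pct_cws,
        "PIC": (pct_wsc + pct_cws) / 2,    # production-independent composite
        "CMIWS": cws - incorrect_ws,       # accurate-production index
    }


# Hypothetical counts for one 3-minute sample
scores = cbm_indices(tww=40, wsc=36, cws=30, possible_ws=42)
print(scores["CMIWS"], scores["%WSC"])  # 18 90.0
```

Because CMIWS reduces algebraically to 2 × CWS minus the possible writing sequences, it rises when a student writes more correct sequences and falls when errors accumulate, which is consistent with its description as an index of both fluency and accuracy.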


participant that had the story starter, “Write a story about your talking dog.” typed at the top. Next, the teacher read aloud the following directions:

You are going to write a story. First I will read the story starter at the top of your page and you will write a story about it. You will have one minute to think about what you will write, and three minutes to write your story. Remember to do your best work. If you don’t know how to spell a word, you should guess. Are there any questions? (Pause – respond to questions.) Put your pencils down and listen. For the next minute, think about… (insert story starter and let the students think for one minute). Now please begin writing. (Students write for three minutes.) Please stop and put your pencils down.

After administration, the writing samples for each student were masked (any identifying information was removed) and scored on the five CBM scoring indices and the THASS scoring system.

All students took the Stanford Achievement Test in the same month as the curriculum-based measure. This test was given over the course of 6 school days. Testing times ranged from 50 to 80 minutes on those days. The test was administered by the classroom teachers and took place in the students’ regular classrooms. The students marked their answers to the multiple-choice questions in test booklets. The testing materials were then sent back to the publisher to be scored by computer.

One graduate and seven undergraduate students were taught the proper procedure for scoring the writing samples using the measures described above. This group of students then practiced scoring on 20 writing samples per grade for Grades 1-8 (160 samples). Following their scoring, interrater reliabilities were calculated for the scoring indices of Total Words Written, Words Spelled Correctly, and Correct Writing Sequences. These indices were based on scores obtained from scoring entire writing passages. Reliability coefficients were not calculated for Correct Minus Incorrect Writing Sequences, Percentage of Words Spelled Correctly, or Percentage of Correct Writing Sequences because each of these scoring indices is imbedded in the other three scoring indices. The coefficients were extremely high (all above .98) for Total Words Written, Words Spelled Correctly, and Correct Writing Sequences. Once it was known that interrater reliability was more than adequate, the group of raters scored the writing samples for the present study. Regarding the THASS, two researchers scored the writing samples (one-third of the samples were scored by both raters). The interrater reliability coefficients between the two raters ranged from .78 to .88 on the three subscale scores and was .88 for the Total score. For scores that did not match, a consensus score was determined by the two raters and that score was used in the final database.

Analyses

To test predictions, analyses included: (a) conducting MANOVAs to investigate grade level and gender differences in scores; (b) computing correlations between scores on the Language and Spelling subtests of the SAT, students’ Language Arts grades, scores on the Tindal and Hasbrouck (1991) Analytic Scoring System (THASS), and scores on the curriculum-based measures TWW, WSC, CWS, CMIWS, %WSC, %CWS, PDC, and PIC; and (c) conducting regression analyses in which students’ scores on the PDC, PIC, and CMIWS and students’ grade level were used as predictors of students’ scores on the analytic scoring system (THASS). Overall, due to the number of analyses conducted and a desire to reduce Type I error, a more conservative critical value (p < .01) was set as the criterion for significance for all analyses.

Results

The predictions investigated in this study were tested using a series of MANOVAs, correlational, and regression analyses. The descriptive results including the students’ performance on the six curriculum-based measures (TWW, WSC, CWS, CMIWS, %WSC, and %CWS), the CBM composites (production-dependent and production-independent), the SAT Language and Spelling subtest scores, fall semester Language Arts grades, and Total THASS scores are presented in Table 2.

Table 2

Descriptive Data on CBM Scores by Grade and Gender, and Stanford Achievement Test Scores, Language Arts Grades, and Analytic Scoring System Scores by Grade

| Measure | Grade 2 M (SD) | Grade 2 N | Grade 4 M (SD) | Grade 4 N | Grade 6 M (SD) | Grade 6 N | Boys M (SD) | Boys N | Girls M (SD) | Girls N |
|---|---|---|---|---|---|---|---|---|---|---|
| Total Words Written | 24.60 (9.16) | 87 | 39.14 (11.38) | 59 | 43.67 (12.96) | 57 | 30.90 (12.83) | 90 | 36.79 (14.11) | 113 |
| Words Spelled Correctly | 20.83 (8.61) | 87 | 36.80 (11.32) | 59 | 41.86 (12.56) | 57 | 28.10 (12.77) | 90 | 33.98 (14.66) | 113 |
| Correct Writing Sequences | 15.61 (9.02) | 87 | 34.56 (12.30) | 59 | 40.79 (13.80) | 57 | 25.48 (14.21) | 90 | 30.35 (17.04) | 113 |
| Production-Dependent Composite | 20.34 (8.46) | 87 | 36.83 (11.40) | 59 | 42.11 (12.62) | 57 | 28.16 (13.02) | 90 | 33.71 (14.91) | 113 |
| % Words Spelled Correctly | 84.25 (10.20) | 86 | 93.66 (5.24) | 59 | 95.90 (4.32) | 56 | 89.83 (9.21) | 88 | 90.60 (9.26) | 113 |
| % Correct Writing Sequences | 59.59 (19.78) | 86 | 79.60 (13.23) | 59 | 85.69 (14.33) | 56 | 72.61 (19.11) | 88 | 70.56 (22.56) | 113 |
| Production-Independent Composite | 70.42 (41.15) | 86 | 86.63 (8.83) | 59 | 90.79 (8.77) | 56 | 81.22 (13.63) | 88 | 80.58 (15.37) | 113 |
| Correct Minus Incorrect Writing Sequences | 6.62 (13.03) | 87 | 29.98 (15.44) | 59 | 37.91 (18.57) | 57 | 20.06 (17.76) | 90 | 23.90 (22.70) | 113 |
| SAT Language subtest | 58.91 (18.44) | 86 | 59.66 (17.38) | 58 | 51.79 (20.13) | 55 | | | | |
| SAT Spelling subtest | 56.10 (16.04) | 86 | 52.67 (18.60) | 58 | 48.57 (21.60) | 55 | | | | |
| Fall Language Arts Grade | na | | 3.37 (.65) | 58 | 3.13 (.90) | 55 | | | | |
| T & H Analytic Scoring System | 5.76 (1.81) | 86 | 8.02 (1.86) | 59 | 8.79 (2.19) | 56 | | | | |

Preliminary Analyses level. Thus, results indicated that how much

students wrote was not significantly related to

The CBM data were examined for po- their writing accuracy. Furthermore, at the old-

tential differences in scores for students in sec- est grade level (sixth grade), the number of

ond, fourth, and sixth grades and gender. A 2 words students spelled correctly was not sig-

(gender) x 3 (grade level) MANOVA was run nificantly related to the production-indepen-

to conduct these exploratory analyses. The six dent measures. This result suggests that with

CBM scoring indices served as the dependent older students, the spelling measure is not re-

variables. There was no significant interaction lated to measures of writing accuracy. The

between grade and gender, Wilks’s lambda = CMIWS scores, however, do relate well with

.946, p > .05. However, main effects were both the production-dependent and the produc-

found for gender [Wilks’s lambda = .870, F tion-independent indices, indicating that in fact

(5, 191) = 5.70, p < .001] and grade [Wilks’s the CMIWS score is tapping aspects of both

lambda = .435, F (10, 382) = 19.69, p < .001]. fluency and quality.

Results of follow-up univariate analyses for the

gender main effect indicated that boys’ and girls’ scores differed significantly on the production-dependent measures, Fs(1, 195) = 16.05, 17.96, 10.30, respectively, ps < .01, but not on the production-independent or accurate-production indices. For all of the production-dependent measures, girls had significantly higher scores than boys. Follow-up univariate analyses on grade level revealed that students in different grades had significantly different scores on all six of the CBM scoring indices, Fs(2, 195) = 69.61, 90.27, 104.26, 46.41, 60.49, and 82.66, respectively, ps < .001. Follow-up Scheffé contrasts found significant differences between students at all three grade levels (second, fourth, and sixth) within the six CBM scoring indices, with two exceptions. No significant difference was found between fourth- and sixth-grade students on the two production-independent measures (%WSC and %CWS). Because significant differences were found between grade levels using production-dependent, accurate-production, and production-independent CBM scoring indices in the majority of circumstances, correlational analyses were run at each grade level and with the total sample.

To investigate the interrelationships among the six CBM written language indices, correlational analyses were conducted (see Table 3). Most of the scores were highly related to one another with a few notable exceptions. The production-independent measures (%WSC and %CWS) were not significantly related to Total Words Written at any grade level.

Relationships Between CBM Scores, Published Standardized Scores, Grades, and the THASS

Correlational analyses between students’ scores on the curriculum-based scoring indices and composites (TWW, WSC, CWS, PDC, %WSC, %CWS, PIC, and CMIWS), subtest scores on the SAT, Language Arts grades, and Total THASS scores were conducted (see Table 4). When testing for significant differences between correlations, Williams’s (1959) method of testing two dependent correlations was used.

SAT Language subtest. In the second- and fourth-grade samples, positive correlations were found between the SAT Language subtest scores and most of the CBM measures (r = .34 to .67, p < .01). Non-significant correlations with the SAT Language score were with TWW in both Grades 2 and 4, and WSC in Grade 4. Correlations between the SAT Language score and the production-independent and accurate-production scores were typically stronger than with the production-dependent scores (see Table 4). In addition, when examining the PDC, PIC, and CMIWS scores, the correlations between the SAT Language score and the PIC and CMIWS were higher than the correlation with the PDC at both levels (Grades 2 and 4). Using Williams’s (1959) approach to test for differences between dependent correlations, it was found that in fourth grade, both the PIC and CMIWS scores were significantly more strongly related to SAT Language than the PDC (ps < .01).


Table 3

Intercorrelations Among the CBM Indices

             TWW     WSC     CWS     CMIWS   %WSC    %CWS
TWW
  Grade 2            .95**   .73**   .30**   .12     .09
  Grade 4            .99**   .89**   .68**   .24     .23
  Grade 6            .99**   .82**   .52**   -.03    -.12
WSC
  Grade 2                    .86**   .53**   .41**   .33**
  Grade 4                    .93**   .76**   .40**   .36**
  Grade 6                    .88**   .62**   .16     .02
CWS
  Grade 2                            .88**   .59**   .72**
  Grade 4                            .94**   .53**   .63**
  Grade 6                            .92**   .40**   .50**
CMIWS
  Grade 2                                    .73**   .92**
  Grade 4                                    .67**   .83**
  Grade 6                                    .59**   .78**
%WSC
  Grade 2                                            .76**
  Grade 4                                            .79**
  Grade 6                                            .68**

** p < .01.

For the Grade 6 sample, no significant correlations between the SAT Language scores and the production-dependent CBM scores (TWW, WSC, and CWS) were found. However, positive, significant correlations were found between the SAT Language scores and the production-independent and accurate-production CBM scores (%WSC, %CWS, and CMIWS) at r = .47, .52, and .41 (p < .01) (see Table 4). Furthermore, the correlations between the SAT Language score and the PIC and CMIWS were higher than the correlation with the PDC. Using Williams’s (1959) approach to test for differences between the dependent correlations, the PIC and CMIWS were found to have significantly higher correlations with SAT Language than the PDC (ps < .001).

SAT Spelling subtest. Examining the Grade 2 and 4 samples, correlations between the two production-independent measures (%WSC and %CWS) and the SAT Spelling subtest scores were positive and significant (r = .43 to .56, p < .01). The correlations between SAT Spelling and CMIWS were also positive and significant at these two levels (rs = .49, p < .01). In addition, there was a significant relationship between CWS and the SAT Spelling subtest at both grade levels (r = .37 and .39, p < .01) (see Table 4). In addition, the correlations between SAT Spelling and the two CBM composites (PDC and PIC) and CMIWS were examined. The PIC and CMIWS scores were more strongly related to SAT Spelling than the PDC scores at both second and fourth grades. At both grade levels, CMIWS had significantly higher correlations with SAT Spelling than the PDC (ps < .01). In second grade, the PIC had significantly higher correlations with SAT Spelling than PDC (p < .01).


Table 4

Correlations Between Scores on Curriculum-Based Measures, the Stanford Achievement Test, Fall Language Arts Grade, and Analytic Scoring System Scores

                       TWW     WSC     CWS     PDC     %WSC    %CWS    PIC     CMIWS
SAT Language
  Grade 2              .24     .38**   .57**   .42**   .46**   .59**   .58**   .62**
  Grade 4              .22     .29     .46**   .34**   .50**   .67**   .65**   .57**
  Grade 6              -.14    -.05    .23     .03     .47**   .52**   .54**   .41**
SAT Spelling
  Grade 2              .03     .17     .37**   .20     .43**   .51**   .51**   .49**
  Grade 4              .19     .25     .39**   .29     .45**   .56**   .55**   .49**
  Grade 6              .02     .13     .33     .17     .52**   .44**   .48**   .44**
Language Arts Grade
  Grade 2              (not available)
  Grade 4              .45**   .51**   .59**   .53**   .53**   .58**   .60**   .61**
  Grade 6              .12     .20     .30     .22     .45**   .29     .35     .36**
Total THASS Score
  Grade 2              .36**   .45**   .58**   .49**   .34**   .47**   .46**   .54**
  Grade 4              .44**   .49**   .55**   .51**   .35**   .40**   .41**   .56**
  Grade 6              .16     .24     .46**   .31     .39**   .49**   .50**   .56**

Note. PDC = production-dependent CBM composite score; PIC = production-independent CBM composite score; CMIWS = correct minus incorrect writing sequences.

** p < .01.
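Correlations such as those in Table 4 can be read as shared variance by squaring them. The following is a minimal sketch (the function name is illustrative and not part of the study) using the smallest and largest significant correlations the study reports, .34 and .67.

```python
def variance_explained_pct(r: float) -> float:
    """Percentage of variance shared by two measures correlated at r (100 * r squared)."""
    return 100 * r**2


for r in (0.34, 0.67):
    print(f"r = {r:.2f} -> {variance_explained_pct(r):.0f}% shared variance")
# r = 0.34 -> 12% shared variance
# r = 0.67 -> 45% shared variance
```

Even the strongest correlation in the table therefore leaves more than half of the variance in the criterion unaccounted for.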

In the sixth-grade sample, only the production-independent and accurate-production CBM indices (%WSC, %CWS, and CMIWS) were positively and significantly related to the SAT Spelling subtest (r = .52, .44, and .44, p < .01). The PIC and CMIWS scores were also more strongly related to SAT Spelling than the PDC score at this level. Using Williams’s (1959) approach to test for differences between dependent correlations, it was found that CMIWS was significantly more related to SAT Spelling than PDC (p < .01).

Language Arts grades. Within the fourth-grade sample, all correlations between the CBM scores and students’ fall semester Language Arts grade were significant (r = .45 to .61, p < .01). The strongest correlations with the Language Arts grade were found with CMIWS, PIC, %CWS, and CWS (see Table 4). Furthermore, the correlations between students’ Language Arts grade and PIC and CMIWS were higher than the correlation with PDC, but no significant differences were revealed.

The sixth-grade sample had only two significant correlations between students’ Language Arts grade and the CBM indices. CMIWS was significant (r = .36, p < .01), as was %WSC (r = .45, p < .01). In addition, students’ Language Arts grades were more strongly related to the PIC and CMIWS than the PDC at this level, but these differences were not significant.

Tindal and Hasbrouck analytic scoring system. At both the second- and fourth-grade levels, all of the CBM scoring indices and composites were significantly related to students’ scores on the THASS (r = .34 to .58, p < .01). At the second-grade level, the production-dependent, production-independent, and accurate-production measures were all similarly related to the THASS. At the fourth-grade level, the production-dependent measures were more strongly related to the THASS than the production-independent measures, but not the accurate-production index. Further examining the PDC, PIC, and CMIWS scores, CMIWS was the most strongly related to the THASS, and PIC was the least related. No significant differences were found.

Examining the Grade 6 sample, the only production-dependent scoring index significantly related to the THASS was CWS (r = .46, p < .01). Both production-independent measures (%WSC and %CWS) were significantly related to the THASS (r = .39 and .49, p < .01). Furthermore, the accurate-production index had the strongest relationship to the THASS (r = .56, p < .01). A similar pattern was seen for the PDC, PIC, and CMIWS scores. Using Williams’s (1959) approach, CMIWS was found to be significantly more related to the THASS than the PDC at this grade level (p < .01).

Overall, the significant correlations between the CBM indices and all other writing criteria used in the present study ranged from .34 to .67. Thus, the amount of variance accounted for in the writing criteria by students’ scores on the CBM indices ranged from 12% to 45%.

Regression analyses. Simultaneous regression analyses were conducted to determine which type of curriculum-based measure (production-dependent, production-independent, or accurate-production) was most related to students’ scores on the THASS. Students’ scores on the variables PDC, PIC, and CMIWS and their grade level were entered simultaneously as predictors in a series of four regression analyses that included students’ scores on the THASS (Total, story-idea, organization-cohesion, and conventions-mechanics) as dependent variables. The three writing components of the THASS had intercorrelations ranging from .73 to .88. The four predictor variables collectively accounted for 53% of the variance in students’ Total THASS scores (p < .01). CMIWS was a significant predictor (β = .48, p < .01). A total of 35% of the variance in students’ story-idea scores was accounted for by the four predictor variables (p < .01), with the PDC being a significant predictor (β = .43, p < .01). Analyses conducted with students’ organization-cohesion scores indicated that the PDC, PIC, CMIWS, and students’ grade level accounted for 30% of the variance (p < .01), but none of the individual variables were significant predictors. The four predictor variables accounted for 62% of the variance in students’ scores on conventions-mechanics (p < .01). CMIWS (β = .77, p < .001) and students’ grade level (β = .22, p < .01) were both significant predictors.

Discussion

The results of the preliminary analyses indicated grade level and gender differences in students’ performance on the CBM written expression scoring indices. Regarding gender, girls significantly outperformed boys on all of the writing fluency measures, but there were no gender differences on the production-independent or accurate-production indices. Thus, girls at all grade levels tended to write more and produce more correctly spelled words and correct writing sequences than boys. However, boys and girls performed similarly on measures of writing accuracy, indicating that even though boys may be less fluent, they are equally accurate in their writing. These new findings should be considered carefully by users of CBM writing data, particularly when only fluency indices are being utilized. Because girls are producing more writing, they will have an advantage if being compared to boys on fluency measures. Thus, perhaps separate norms should be created for boys and girls.

When examining grade level, second, fourth, and sixth graders’ scores were significantly different from one another on all CBM indices with the exception of fourth and sixth graders not having significantly different scores on the production-independent indices (%WSC and %CWS). This is further validity evidence for these measures in that one would expect the scores to change as students’ skills matured, particularly between second and fourth grades. Additionally, results revealed that the pure fluency measure (number of words written) is not related to measures of writing accuracy at any grade level. The CMIWS scores, however, do seem to be tapping aspects of both writing fluency and accuracy, as evidenced by significant correlations with the production-dependent and production-independent scoring indices. Malecki and Jewell (2003) also recently found that CMIWS was significantly related to measures of both writing fluency and accuracy. Thus, consistent with best practices, educators need to be clear on the purpose of their assessments before choosing which CBM writing indicators are appropriate. Fluency and accuracy scores will be tapping different abilities, particularly at older grade levels. If one wanted a broad score that accounted for both fluency and accuracy, perhaps the CMIWS score would be an appropriate choice.

Unlike earlier work performed by Deno et al. (1980) and Deno et al. (1982), the present study did not find consistent significant relationships between the production-dependent scoring indices and the other criterion measures for the sixth-grade students. Instead, the present study detected grade level differences with how the three types of CBM scores (production-dependent, production-independent, and accurate-production) related to external writing criteria. The present study found that as grade level increased, fewer of the CBM scoring indices were significantly correlated with the criterion measures of SAT subtest scores, language grades, and scores on the THASS. Specifically, with the early elementary-aged children (second grade), most of the production-dependent, production-independent, and accurate-production measures were significantly related to the writing criteria. However, when examining older students’ (sixth grade) scores, in all but one case only the production-independent and accurate-production writing measures were significantly related to the other criteria. The number of correct writing sequences continued to be significantly related to the criterion measures in sixth grade, but the other more “pure” fluency measures (e.g., number of words written and words spelled correctly) were not significantly related to the criterion measures at the older grade level. These findings suggest that as students hit the late elementary/middle school grades, teachers and school psychologists should demonstrate caution in using CBM to make high-stakes educational decisions or to influence instruction if only TWW or WSC are being used. It appears that examining only the quantity of these students’ writing is not assessing the skills being measured by the criterion measures. Rather, CWS, %WSC, %CWS, and CMIWS appear to be the more valid indices at the sixth grade level.

A second major finding of the present study was that with few exceptions, a stronger relationship was found between production-independent scoring indices and the writing criteria and between CMIWS and the criteria than with production-dependent scoring indices at all grade levels. This finding suggests that at all grade levels, measures of writing accuracy (production-independent and accurate-production) may be more strongly related to students’ performance on other types of writing criteria than measures of writing fluency. This result is consistent with the work of Tindal and Parker (1989), which revealed that production-independent scoring indices were more closely related to teachers’ holistic ratings than production-dependent scoring indices. The present study extends the work of Tindal and Parker by including both direct and indirect types of writing criteria, by using three types of CBM writing indices, and by finding a grade level trend in how CBM scores relate to the writing criteria. The results of the present study indicate that at the older grade levels especially, the production-dependent measures may not be significantly related to students’ performance on other criteria.

This grade level trend in how measures of writing fluency versus measures of writing accuracy relate to students’ scores on other writing criteria suggests differences in the validity of the three types of CBM scores at different grade levels. For example, it appears that the pure measures of writing fluency (number of words written and number of words spelled correctly) are not appropriate to use at older grade levels. On the other hand, at younger grade levels, these fluency measures are related to writing criteria such as students’ Language Arts grade and their scores on an analytic scoring system. Therefore, when working with students in older grades, using production-independent and accurate-production measures would provide information that is more closely tied to other writing criteria, such as academic achievement measures, than measures of writing fluency. The importance of using measures of writing accuracy with older students could mirror the increased emphasis that both students and teachers place on writing quality as students progress through school. Younger students are still working on becoming fluent writers, whereas older students begin to pay more attention to the quality of their writing. Thus, measures of writing quality are more accurate in their assessment of older students’ writing abilities.

The results of the present study indicated that collectively, the three types of curriculum-based measures (production-dependent, production-independent, and accurate-production) were significantly related to the four THASS scores (total, story-idea, organization-cohesion, and conventions-mechanics). This finding is important because the THASS was selected as a criterion measure because of its similarity to state-wide writing assessments currently being used. The current study found that CMIWS was a significant predictor of students’ Total THASS and conventions-mechanics scores, suggesting that a measure that utilizes the combination of writing fluency and accuracy best relates to students’ overall writing score and their usage of mechanics. This result is further evidence for the validity of the CMIWS score. In addition, students’ grade level was also a significant predictor of their score on the conventions-mechanics component of the THASS. This finding indicates that the grade level of the students affected their performance in writing mechanics. In other words, as children advance through school, their skills in writing conventions and mechanics also progress. The same is true for students’ scores on the various CBM indices.

It should be noted that although the correlations among the CBM production-independent and accurate-production indices for all grade levels were significantly related to the national standardized achievement test scores as well as to the THASS scores, the correlations ranged from .43 to .67 for the SAT scores, and from .34 to .56 for the THASS scores. These correlations are moderate to strong; however, they are not overwhelming. In addition, the correlations are similar in strength between the CBM indices and SAT scores versus the THASS scores, meaning that no conclusions can be drawn about the merits of the SAT versus the THASS as related to CBM indices. Overall, the results of the current study add to our understanding of CBM writing indices, but help to emphasize that we should always choose the assessment that most closely relates to the skills needing to be assessed and that is most valid for our intended purpose. These decisions should be made on a student-by-student and purpose-by-purpose basis.

Limitations of the Study

A limitation of the present study was that data regarding procedural integrity were not collected. Teachers were given explicit directions on how to administer the CBM writing probes to their class in both verbal and written formats. However, after the administration of the CBM probes, teachers were not asked if the proper procedure was followed. After examining the writing samples generated by the students, it appeared as if the standardized procedure had been followed by all teachers, but this cannot be confirmed without procedural integrity information.

A second limitation was that none of the writing samples used in the present study were scored by more than one rater. The scorers underwent an intensive training process and practiced scoring on 160 “example” writing samples from various grade levels. However, only one person scored each writing sample used in the present study and therefore interrater reliability was not calculated for the actual study’s writing samples.

Future Research Directions

The three types of curriculum-based measures investigated in the present study (production-dependent, production-independent, and accurate-production) have been shown to have adequate evidence for their reliable and valid use under typical circumstances (Deno et al., 1980; Espin et al., 2000; Marston & Deno, 1981; Tindal & Parker, 1989; Videen et al., 1982). The present study was the first to examine these three types of CBM indices simultaneously and found a grade level trend in how these indices relate to external writing criteria. Future research needs to elaborate upon the grade level trend that exists within the area of CBM writing to further delineate which type of CBM index is most appropriate to use with different ages of students. Another possible direction for future research is to explore the gender differences found in the current study between boys’ and girls’ writing fluency abilities. If this distinction between the genders is replicated, research could further examine the practical implications of this difference. Furthermore, research on the utility of CBM writing measures for a variety of academic purposes, such as progress monitoring and eligibility decisions, needs to be conducted. When research examines the application of CBM written expression scoring indices, different grade levels should be used to further investigate possible developmental trends.

In conclusion, examining the relationship between students’ scores on CBM, a published standardized achievement test, classroom grades, and an analytic scoring system revealed consistently stronger relationships between production-independent and accurate-production CBM scoring measures with the other writing criteria than with production-dependent CBM scoring measures. With students in older grades, it was primarily the production-independent and accurate-production measures that were significantly related to the criterion measures. With the early elementary students, all three types of CBM scores were typically related to the criterion measures. These results illustrated a grade level trend in how measures of writing fluency versus measures of writing accuracy related to other indicators of students’ writing performance.

Finally, the results from this study emphasize the importance of making informed decisions about what types of assessment measures to use when making educational decisions for students. The present study, along with previous literature (e.g., Espin et al., 2000; Tindal & Parker, 1989), suggests that the age, skill level, and gender of the student, the purpose of the testing (e.g., progress monitoring, making comparisons across students, communicating results with parents, eligibility decisions), and the goal(s) of the assessment are all factors that must be taken into account. If a teacher wants to monitor the increase in the amount of writing a student can produce regardless of accuracy, Total Words Written would be suitable. If a teacher wants to examine only the accuracy of writing in terms of grammar, punctuation, spelling, or syntax, the percentage indices may be appropriate, especially with older students. Furthermore, the new indicator, Correct Minus Incorrect Writing Sequences, may serve as an appropriate measure of students’ overall writing proficiency that has shown convincing reliability and validity evidence. Further research can continue to add to such recommendations and make educators’ decision making in choosing appropriate CBM writing indices more explicit.
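To make the distinction among the three kinds of indices concrete, the sketch below derives them from the raw counts of a single scored writing probe. It is an illustration rather than the study's scoring procedure: the class and function names are hypothetical, the two probes are invented, and the denominator used for %CWS (correct plus incorrect writing sequences) is an assumption.

```python
from dataclasses import dataclass


@dataclass
class ProbeCounts:
    """Raw counts from one scored writing probe (hypothetical container)."""
    total_words: int          # TWW
    words_correct: int        # WSC
    correct_sequences: int    # CWS
    incorrect_sequences: int  # incorrect writing sequences


def cbm_indices(c: ProbeCounts) -> dict:
    """Return production-dependent, production-independent, and accurate-production scores."""
    # ASSUMPTION: total writing sequences = correct + incorrect sequences
    total_sequences = c.correct_sequences + c.incorrect_sequences
    return {
        # Production-dependent (fluency) indices
        "TWW": c.total_words,
        "WSC": c.words_correct,
        "CWS": c.correct_sequences,
        # Production-independent (accuracy) indices
        "%WSC": 100 * c.words_correct / c.total_words if c.total_words else 0.0,
        "%CWS": 100 * c.correct_sequences / total_sequences if total_sequences else 0.0,
        # Accurate-production index
        "CMIWS": c.correct_sequences - c.incorrect_sequences,
    }


# Two invented probes: a longer, less accurate sample and a shorter, more accurate one.
longer = cbm_indices(ProbeCounts(40, 36, 30, 12))
shorter = cbm_indices(ProbeCounts(20, 19, 18, 3))
for name, scores in (("longer", longer), ("shorter", shorter)):
    print(name, scores)
```

In this invented example the longer sample scores higher on every production-dependent index, the shorter sample scores higher on both percentage indices, and CMIWS falls between the two views because it rewards correct output while penalizing errors.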


References

Deno, S. L., Fuchs, L. S., Marston, D., & Shin, J. (2001). Using curriculum-based measurement to establish growth standards for students with learning disabilities. School Psychology Review, 30, 507-524.

Deno, S. L., Marston, D., & Mirkin, P. (1982). Valid measurement procedures for continuous evaluation of written expression. Exceptional Children, 48, 368-371.

Deno, S. L., Mirkin, P. K., & Marston, D. (1980). Relationships among simple measures of written expression and performance on standardized achievement tests (Rep. No. 22). Minneapolis: University of Minnesota, Institute for Research on Learning Disabilities.

Elliott, S. N., & Fuchs, L. S. (1997). The utility of curriculum-based measurement and performance assessment as alternatives to traditional intelligence and achievement tests. School Psychology Review, 26, 224-233.

Espin, C., Shin, J., Deno, S. L., Skare, S., Robinson, S., & Benner, B. (2000). Identifying indicators of written expression proficiency for middle school students. Journal of Special Education, 34, 140-153.

Fuchs, L. S., & Fuchs, D. (1997). Use of curriculum-based measurement in identifying students with disabilities. Focus on Exceptional Children, 30(3), 1-16.

Fuchs, L. S., Fuchs, D., & Hamlett, C. L. (1990). Curriculum-based measurement: A standardized, long-term goal approach to monitoring student progress. Academic Therapy, 25, 615-632.

Hammill, D. D., & Larsen, S. C. (1978). The Test of Written Language. Austin, TX: Pro-Ed.

Harcourt Brace Educational Measurement. (1997). Stanford Achievement Test, Ninth Edition. San Antonio, TX: Harcourt Brace Educational Measurement.

Knoff, H. M., & Dean, K. R. (1994). Curriculum-based measurement of at-risk students’ reading skills: A preliminary investigation of bias. Psychological Reports, 75, 1355-1360.

Kranzler, J. H., Miller, M. D., & Jordan, L. (1999). An examination of racial/ethnic and gender bias on curriculum-based measurement of reading. School Psychology Quarterly, 14, 327-342.

Madden, R., Gardner, E. F., Rudman, H. C., Karlsen, B., & Merwin, J. C. (1978). Stanford Achievement Test. New York: Harcourt Brace Jovanovich.

Malecki, C. K., & Jewell, J. (2003). Developmental, gender, and practical considerations in scoring curriculum-based measurement writing probes. Psychology in the Schools, 40, 379-390.

Marston, D. B. (1989). A curriculum-based measurement approach to assessing academic performance: What it is and why do it. In M. R. Shinn (Ed.), Curriculum-Based Measurement: Assessing Special Children (pp. 18-78). New York: Guilford Press.

Marston, D., & Deno, S. (1981). The reliability of simple, direct measures of written expression (Rep. No. 50). Minneapolis: University of Minnesota, Institute for Research on Learning Disabilities.

Marston, D., Fuchs, L. S., & Deno, S. L. (1986). Measuring pupil progress: A comparison of standardized achievement tests and curriculum-related measures. Diagnostique, 11, 77-90.

Moran, M. R. (1987). Options for written language assessment. Focus on Exceptional Children, 19, 1-10.

Parker, R., Tindal, G., & Hasbrouck, J. (1991). Countable indices of writing quality: Their suitability for screening-eligibility decisions. Exceptionality, 2, 1-17.

Shinn, M. R. (1995). Best practices in curriculum-based measurement and its use in a problem-solving model. In A. Thomas & J. Grimes (Eds.), Best practices in school psychology III (pp. 547-567). Washington, DC: National Association of School Psychologists.

Shinn, M. R., Ysseldyke, J., Deno, S., & Tindal, G. (1982). A comparison of psychometric and functional differences between students labeled learning disabled and low achieving (Rep. No. 71). Minneapolis: University of Minnesota, Institute for Research on Learning Disabilities.

Stiggins, R. J. (1982). A comparison of direct and indirect writing assessment methods. Research in the Teaching of English, 16, 101-114.

Tindal, G., & Hasbrouck, J. (1991). Analyzing student writing to develop instructional strategies. Learning Disabilities Research and Practice, 6, 237-245.

Tindal, G., & Parker, R. (1989). Assessment of written expression for students in compensatory and special education programs. The Journal of Special Education, 23, 169-183.

Tindal, G., & Parker, R. (1991). Identifying measures for evaluating written expression. Learning Disabilities Research and Practice, 6, 211-218.

Videen, J., Deno, S., & Marston, D. (1982). Correct word sequences: A valid indicator of proficiency in written expression (Rep. No. 84). Minneapolis: University of Minnesota, Institute for Research on Learning Disabilities.

Watkinson, J. T., & Lee, S. W. (1992). Curriculum-based measures of written expression for learning-disabled and nondisabled students. Psychology in the Schools, 29, 184-191.

Williams, E. J. (1959). The comparison of regression variables. Journal of the Royal Statistical Society (Series B), 21, 396-399.

Jennifer Jewell received her PhD in Psychology (School specialization) at Northern Illi-

nois University in DeKalb. She is a school psychologist with Woodford County Special

Education Association in Illinois and is an adjunct professor in the Psychology Depart-

ment at Bradley University in Peoria, IL. Her primary research area is curriculum-based

measurement in written language.
